If you've worked on browser-based apps before, you may be familiar with a tool called Lighthouse.

Lighthouse is an auditing tool that gives you a series of "scores" for various categories, e.g. Accessibility, Performance, SEO. It's available in Chrome DevTools and can also be run via the CLI (command line interface).

In this post we're going to focus on how Lighthouse measures performance and how that differs from other tools.

Lighthouse in DevTools

Lighthouse loads your site to calculate metrics and judge how performant it is. However, there are different ways to run Lighthouse reports, and Lighthouse itself provides different throttling modes!

DevTools throttling (sometimes referred to as request-level throttling)

In this mode, Lighthouse attempts to mimic your site's behavior on a slow device. It accomplishes this by throttling the connection and CPU, replicating something like a mid-tier phone (historically a Moto G4) on a slow 4G connection. It does this through the Chrome browser (this is a Google tool, so it's only testing on the Google browser). While this helps test site performance on a slow device, it isn't an exact simulation. That's because the "slowness" is relative to the speed of your local device.

If you're running a high-powered Mac with a really strong internet connection, it's going to register a better score than the same simulation run on an older, slower machine.
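To make the "relative slowness" point concrete, here's a toy sketch. The host names and timings are made up for illustration, and the 4x CPU slowdown is what I understand Lighthouse's default mobile setting to be. The same multiplier applied to two different machines gives two different "slow device" results.

```python
# Toy illustration: a relative slowdown inherits the speed of the host machine.
# The 4x value is assumed to match Lighthouse's default mobile CPU slowdown.
CPU_SLOWDOWN = 4

# Hypothetical time (ms) each host needs to run the same JavaScript, unthrottled.
host_script_ms = {
    "high-powered desktop": 50,
    "older laptop": 120,
}


def throttled_ms(unthrottled_ms: float, slowdown: float = CPU_SLOWDOWN) -> float:
    """Apply a relative slowdown to a duration measured on the host."""
    return unthrottled_ms * slowdown


results = {host: throttled_ms(ms) for host, ms in host_script_ms.items()}
for host, ms in results.items():
    print(f"{host}: {ms:.0f} ms under {CPU_SLOWDOWN}x throttling")
```

Both runs claim to represent the same slow phone, yet the older laptop reports more than twice the script time.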

Simulated throttling

The aim of this mode is the same as DevTools throttling: mimic your site's behavior on a slow device and connection. However, Lighthouse runs against a fast device and then calculates what experience a slow device would have. We'll dive into this more in the next section on PageSpeed Insights.

Packet-level throttling

In this mode, Lighthouse does not throttle anything itself and expects the operating system to do it. We'll explain this mode more in the section on WebPageTest.

What is interesting about these modes is that depending on which tool you're using to access Lighthouse reports, you may be running a different mode.

Historically, running a Lighthouse audit in Chrome DevTools used the first mode by default; recent DevTools versions default to simulated throttling and offer DevTools throttling as an option. Running via the Chrome extension uses the second. The CLI version of Lighthouse allows you to pass a flag, `--throttling-method`, to specify which mode you'd like to use. It uses simulated throttling by default.
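As a rough sketch of what choosing a mode on the CLI looks like, the helper below builds a Lighthouse command for each of the three modes. The function name and the report path are my own placeholders; the flag values `simulate`, `devtools`, and `provided` are the ones I understand the CLI to accept, with `provided` meaning "something else (like the OS) is already throttling".

```python
import shlex
from typing import List

# Values assumed to be accepted by --throttling-method:
#   simulate -> simulated throttling, devtools -> DevTools throttling,
#   provided -> Lighthouse applies no throttling (packet-level is done elsewhere)
THROTTLING_METHODS = ("simulate", "devtools", "provided")


def lighthouse_command(url: str, throttling_method: str = "simulate") -> List[str]:
    """Build (but do not run) a Lighthouse CLI command for a performance audit."""
    if throttling_method not in THROTTLING_METHODS:
        raise ValueError(f"unknown throttling method: {throttling_method}")
    return [
        "lighthouse",
        url,
        f"--throttling-method={throttling_method}",
        "--only-categories=performance",
        "--output=json",
        "--output-path=./report.json",  # placeholder path
    ]


cmd = lighthouse_command("https://example.com", "devtools")
print(shlex.join(cmd))
```

If Lighthouse is installed, you could hand the list to `subprocess.run(cmd, check=True)` and compare the reports each mode produces for the same page.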

PageSpeed Insights

PageSpeed Insights (PSI) is another Google-provided tool. It uses the simulated throttling mentioned above.

PSI's lab data comes from Lighthouse running on Google's servers, so it isn't influenced by the specs of your local machine. It gets metrics using a fast device and then artificially calculates what a slow device would experience. Of the three throttling methods above, this is the fastest way to calculate performance metrics.

Why does it matter if it's fast? Well, PSI is run for millions of pages in order to support SEO. We'll talk about that at the end.

But because of this, the calculations need to be fast rather than perfect. Estimating the slow-device experience from a fast run is cheaper than DevTools throttling and typically just as accurate or better. Note that it can be worse in certain edge cases.
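Here's a deliberately simplified sketch of the "measure fast, then calculate slow" idea. It is not Lighthouse's actual simulation, which models the page's requests and tasks in far more detail. The `Observation` fields and the formula are my own toy model, and the network and CPU constants are what I understand Lighthouse's slow 4G mobile defaults to be.

```python
from dataclasses import dataclass

# Assumed slow-device targets (believed to match Lighthouse's mobile defaults).
RTT_MS = 150              # round-trip time
THROUGHPUT_KBPS = 1638.4  # roughly 1.6 Mbps, in kilobits per second
CPU_SLOWDOWN = 4          # CPU slowdown multiplier


@dataclass
class Observation:
    """What a single fast, unthrottled run observed (hypothetical fields)."""
    transfer_kb: float  # kilobytes downloaded on the critical path
    round_trips: int    # sequential network round trips
    cpu_ms: float       # main-thread work measured on the fast machine


def estimate_slow_load_ms(obs: Observation) -> float:
    """Toy estimate: latency + download time + scaled CPU work."""
    latency_ms = obs.round_trips * RTT_MS
    download_ms = obs.transfer_kb * 8 / THROUGHPUT_KBPS * 1000
    cpu_ms = obs.cpu_ms * CPU_SLOWDOWN
    return latency_ms + download_ms + cpu_ms


fast_run = Observation(transfer_kb=204.8, round_trips=4, cpu_ms=100)
print(f"estimated slow-device load: {estimate_slow_load_ms(fast_run):.0f} ms")
```

The page only has to be loaded once, at full speed, and the rest is arithmetic. That's why this approach scales to millions of pages, and also why it can miss edge cases that only show up when the page really runs slowly.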

Another thing about PSI is that, for some sites, you also get data from CrUX (the Chrome User Experience Report). This report uses real user monitoring (RUM) and bases the page metrics on how real users experience a page. This is the most accurate type of data and produces the metrics that most directly reflect users' experience of performance.

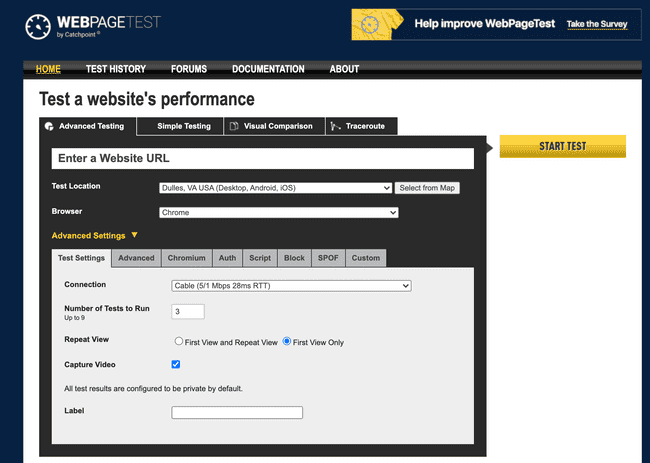
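If you'd rather pull these numbers programmatically, PSI also has an HTTP API. The sketch below builds a request URL and picks the lab score and one field (CrUX) metric out of a response. The `sample_response` is a hand-trimmed, made-up payload that only mirrors the fields I believe the v5 API returns, so check any real response against the API docs.

```python
from typing import Optional
from urllib.parse import urlencode

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def psi_url(page_url: str, strategy: str = "mobile") -> str:
    """Build a PSI API request URL (an API key can be added as another param)."""
    return f"{PSI_ENDPOINT}?{urlencode({'url': page_url, 'strategy': strategy})}"


def summarize(response: dict) -> dict:
    """Separate the lab (Lighthouse) score from the field (CrUX) data."""
    lab_score = response["lighthouseResult"]["categories"]["performance"]["score"]
    # Field data is absent for pages without enough real-user traffic.
    field = response.get("loadingExperience", {}).get("metrics", {})
    lcp: Optional[dict] = field.get("LARGEST_CONTENTFUL_PAINT_MS")
    return {
        "lab_performance_score": lab_score,
        "field_lcp_percentile_ms": lcp["percentile"] if lcp else None,
        "field_lcp_category": lcp["category"] if lcp else None,
    }


# Made-up, trimmed payload for illustration only.
sample_response = {
    "lighthouseResult": {"categories": {"performance": {"score": 0.72}}},
    "loadingExperience": {
        "metrics": {
            "LARGEST_CONTENTFUL_PAINT_MS": {"percentile": 2300, "category": "FAST"}
        }
    },
}

print(psi_url("https://example.com/"))
print(summarize(sample_response))
```

Keeping the two apart in the summary mirrors how the PSI page presents them: one number comes from a simulated lab run, the other from real Chrome users.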

WebPageTest

The last automated performance tool is WebPageTest. This tool uses packet-level throttling and runs its performance benchmarks on real hardware in a real location. As a result, it isn't influenced by your local machine the way DevTools throttling is.

It still simulates the slow connection, but it does so at the operating system level, which makes it more accurate. However, it can also introduce more variance between runs.

Why does this matter?

It seems like there are a lot of tools to test performance, but why does this matter? Do milliseconds really make a difference?

Well, Google is an ecosystem, and most of us are familiar with it because of Google Search. Ranking highly on Google Search is important for a lot of websites. Per Google, site performance impacts a site's ranking.

Specifically, the impact of site performance on ranking is based on Core Web Vitals. We'll talk about that in the next post.